Description

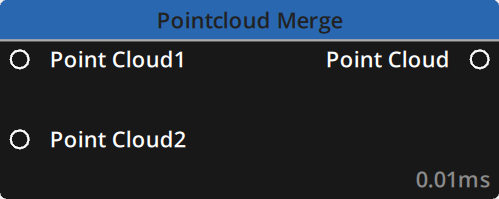

This node merges two or more Point Cloud datasets into one.

Supported file formats :

Properties

There are two tabs in the Editor panel :

Inputs

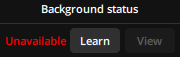

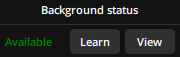

An indicator to the right of each Point Cloud input shows the merging status of that input:

UID: A unique identifier for each Merge Point Cloud node, generated automatically when you create the node in your graph. This is useful for copying calibration data, as shown below.

Point Cloud1: This is the primary Point Cloud. It is output automatically as soon as a valid Point Cloud dataset is received on its input, and the indicator on the right turns green.

If the indicator is red, either nothing is connected to the input or the point cloud format is invalid.

Point Cloud2: This is the first secondary Point Cloud. By default, it is not included in the merge until the system is properly calibrated.

Once the calibration is complete, a transformation matrix is computed for each secondary point cloud and applied to it, so that its data is merged in alignment with the primary point cloud.
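As a rough illustration of what applying a transformation matrix to a secondary point cloud means, the sketch below assumes that points are (x, y, z) tuples and that each transformation is a 4x4 homogeneous matrix in row-major order. The function names are hypothetical and this is not the node's internal implementation.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]
Matrix4 = Sequence[Sequence[float]]  # assumed layout: 4 rows of 4 values, row-major


def transform_point(matrix: Matrix4, point: Point) -> Point:
    """Apply a 4x4 homogeneous transform (rotation + translation) to one point."""
    x, y, z = point
    return tuple(
        matrix[row][0] * x + matrix[row][1] * y + matrix[row][2] * z + matrix[row][3]
        for row in range(3)
    )


def merge_point_clouds(
    primary: Sequence[Point],
    secondaries: Sequence[Sequence[Point]],
    transforms: Sequence[Matrix4],
) -> List[Point]:
    """Return the primary points followed by every secondary cloud,
    each moved into the primary cloud's coordinate frame."""
    if len(secondaries) != len(transforms):
        raise ValueError("one transformation matrix is needed per secondary cloud")
    merged = list(primary)  # the primary cloud is passed through unchanged
    for cloud, matrix in zip(secondaries, transforms):
        merged.extend(transform_point(matrix, p) for p in cloud)
    return merged
```

For example, with a matrix that shifts the secondary cloud by 1 unit along x, a secondary point at (1, 0, 0) ends up at (2, 0, 0) in the merged output.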

Calibration

Calibrating this node is mandatory for it to function properly.

Click the Calibrate button to begin calibration. A dialog box opens in which you can choose to calibrate either From stream, using the live feed of your 3D sensor, or From file, using a previous calibration made with the From stream option.

Calibrating from Stream :

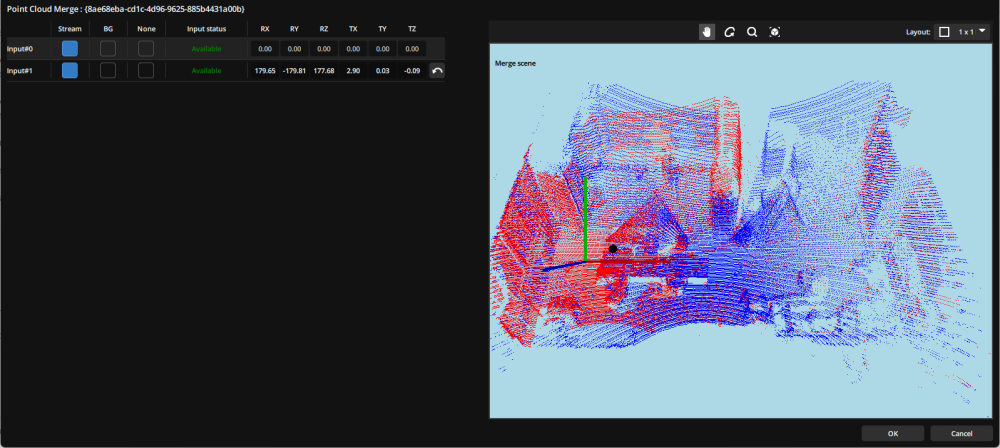

A pop-up window will appear showing the Current Configuration of the node.

To calculate the transformation matrix for each input, the node needs to know the background of your scene as detected by each 3D sensor. The current status for each background is indicated in the Current Configuration window.

The Learn button lets you launch the background learning sequence from the stream.

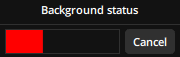

When you click the Learn button, a status bar fills to indicate the progress.

Click the Learn button again. The View button becomes available to display the known background in the Preview section on the right.

When learning the backgrounds, keep the tracking field free of any movement to prevent errors and false detections.

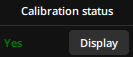

Next to the Background section, the Calibration Status section shows whether the transformation matrix has been calculated for each input.

Once the calibration has been computed for an input, you can use the Display button to show its data in red, overlaid on the primary point cloud, which is always displayed in blue.

If you would rather visualise a point cloud against a reference other than the primary point cloud, you can select both the target and the reference point cloud by their indexes in the top part of the interface.

Once the backgrounds of the secondary point cloud inputs are known, select the inputs you want to calibrate (any number of them) and click the Next button to launch the calibration and compute their transformation matrices.

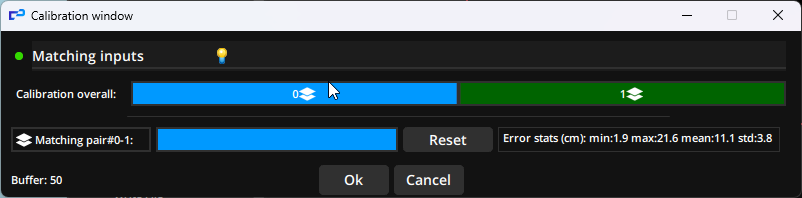

Another pop-up window will appear: the Calibration window.

For a proper calibration, make sure that only one person or object is moving in your scene during the calibration process.

A status bar indicates the progress of the calibration procedure.

Once the calibration is complete, the Calibration window shows how many errors occurred during the procedure and turns the correctly calibrated point cloud inputs green.

You can click on any input (including the primary point cloud input) to open a pop-up window displaying its data.

Once the calibration is complete, the Refine button in the Calibration tab enables you to see the data used in the transformation matrix for each input.

You can manually refine the data and, for each input, toggle a live preview of either the stream data or the background.

Calibrating from File :

To recall a previous calibration using the From file option, point to the folder containing the calibration data you would like to recall. Calibration data is stored in folders named after the UID of each node, located in your Show’s folder inside the ‘.log’ subfolder.
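If you need to locate these folders outside the application, the layout described above can be sketched as follows. This is a minimal sketch: the function names are hypothetical, the Show folder path and UID are whatever your own setup uses, and the '.log' subfolder may also contain folders that are not calibration data.

```python
from pathlib import Path
from typing import List


def calibration_folder(show_folder: str, node_uid: str) -> Path:
    """Build the expected path <Show folder>/.log/<node UID>."""
    return Path(show_folder) / ".log" / node_uid


def list_saved_calibrations(show_folder: str) -> List[str]:
    """List the folder names found under <Show folder>/.log.

    Assumption: every subfolder is named after a node UID; anything else
    stored there would be listed as well.
    """
    log_dir = Path(show_folder) / ".log"
    if not log_dir.is_dir():
        return []
    return sorted(entry.name for entry in log_dir.iterdir() if entry.is_dir())
```

The UID to look for is the one displayed in the UID field of the Merge Point Cloud node whose calibration you want to reuse.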

Calibrating options :

When launching a calibration between two inputs, the node will analyze clusters of points that share a similar volume and that move between frames to produce matches. A minimum number of matches is necessary to consider the calibration complete. The following options enable you to tweak the calibration procedure.

Max processing points: The maximum number of points that will be processed in each input point cloud. If an input point cloud contains more points than this value, it is automatically downscaled to match it.

Default : 100 000

Max points output: The maximum number of points in the output point cloud. Setting a value of 1000 or less deactivates this property, and there is then no limit on how many points the output contains.

Default : 100 000

Calibration matching size: The number of matches to reach before computing the merge transformation matrix.

Default : 100

Volume similarity (%): Sets the minimum correspondence in volume (computed automatically) that two clusters must reach to be matched.

Default : 80

Min distance (cm): Sets the minimum distance (in cm) a cluster must move between two frames to produce a new match between two inputs.

Default : 1
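To make the interplay of these options more concrete, here is a minimal sketch of how they could gate the calibration. Only the default values come from the list above. The class and function names, the random subsampling, the smaller-over-larger volume ratio, and the reading of Min distance as cluster displacement between frames are all assumptions made for illustration; the node's actual algorithms are not documented here.

```python
import math
import random
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, float, float]


@dataclass
class CalibrationOptions:
    # Defaults taken from the option list above.
    max_processing_points: int = 100_000
    max_points_output: int = 100_000
    calibration_matching_size: int = 100
    volume_similarity_pct: float = 80.0
    min_distance_cm: float = 1.0


def limit_points(points: Sequence, max_points: int,
                 rng: Optional[random.Random] = None) -> List:
    """Downscale a cloud to at most max_points (assumed: random subsampling)."""
    if len(points) <= max_points:
        return list(points)
    rng = rng or random.Random(0)
    return rng.sample(list(points), max_points)


def limit_output(points: Sequence, opts: CalibrationOptions) -> List:
    """A value of 1000 or less deactivates the output limit."""
    if opts.max_points_output <= 1000:
        return list(points)
    return limit_points(points, opts.max_points_output)


def volume_similarity_pct(vol_a: float, vol_b: float) -> float:
    """Assumed metric: smaller volume over larger volume, as a percentage."""
    if vol_a <= 0 or vol_b <= 0:
        return 0.0
    return 100.0 * min(vol_a, vol_b) / max(vol_a, vol_b)


def is_new_match(vol_a: float, vol_b: float, prev_pos_cm: Point,
                 curr_pos_cm: Point, opts: CalibrationOptions) -> bool:
    """Two clusters match if their volumes are similar enough and the
    cluster moved far enough since the previous frame."""
    similar = volume_similarity_pct(vol_a, vol_b) >= opts.volume_similarity_pct
    moved = math.dist(prev_pos_cm, curr_pos_cm) >= opts.min_distance_cm
    return similar and moved


def calibration_ready(match_count: int, opts: CalibrationOptions) -> bool:
    """The matrix is computed once enough matches have been collected."""
    return match_count >= opts.calibration_matching_size
```

Under these assumptions, raising Volume similarity or Min distance makes each match harder to obtain but more reliable, while raising Calibration matching size lengthens the calibration procedure.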

Inputs

| Name | Type | Description |
|---|---|---|
| Point Cloud1 | Point Cloud | The primary point cloud dataset for the merge |
| Point Cloud2 | Point Cloud | The first secondary point cloud dataset for the merge |

Outputs

| Name | Type | Description |
|---|---|---|
| Point Cloud | Point Cloud | The resulting Point Cloud of the merge |

Example
